The Testing Illusion: Why Your QA Process Is Lying to You About Agent Reliability

You shipped the agent.

It passed every test you wrote.

Coverage looked solid. The demo worked great.

Then production happened.

Within two weeks, your "battle-tested" agent started selecting the wrong visualization types for time-series data. API calls sprouted random parameters that weren't there before. Multi-turn conversations collapsed after four exchanges, forcing users to repeat themselves like they were talking to a goldfish.

Nothing in your codebase changed. The APIs didn't change. Your tests still pass.

But something is deeply broken.

And your entire QA process failed to warn you.

The Evaluation Blindspot

Here's the uncomfortable truth most builders haven't confronted yet: traditional testing methodologies are structurally incapable of catching the failure modes that actually kill agentic systems in production.

This isn't a minor gap. It's a fundamental mismatch between how we've tested software for decades and how autonomous AI systems actually fail.

Research on unorchestrated multi-agent systems shows failure rates between 41% and 86.7% in production environments [1]. These aren't edge cases. They're the norm. And the failures aren't simple inaccuracies—they're structural breakdowns in coordination, infinite recursive loops, and what researchers call "Hallucination Cascades" where a minor upstream error amplifies into catastrophic downstream collapse [1].

The old testing playbook—unit tests, integration tests, code coverage—was built for deterministic systems. Given the same inputs, you get the same outputs. Run the test suite, hit 90% coverage, feel confident.

Agentic systems demolish that assumption.

An LLM-based agent calling a weather API might work perfectly on Tuesday. On Wednesday, given the identical query, it attempts to call a "get_precipitation" endpoint that doesn't exist. Your code didn't change. Your API didn't change. The agent's reasoning pathway shifted due to sampling stochasticity, subtle prompt variations from memory state, or emergent behavior from tool-use chain-of-thought [7][1].

This is what I call The Evaluation Blindspot: the dangerous gap between what your tests measure and what actually determines whether your agent survives contact with real users.

And until you close it, you're shipping on faith.

The Illusion of Coverage

Let me narrow the target here, because I don't want you walking away thinking tests are worthless.

Unit tests for your deterministic substrate—the scaffolding around your agent, the API handlers, the data transformations—those still matter. Write them. Keep them green.

But unit tests for "reasoning quality"? That's mostly theater.

Code coverage measures which lines of deterministic code execute during testing. For an agentic system, the critical quality determinants—prompt construction, tool selection reasoning, multi-step planning coherence—exist outside the codebase entirely. You can achieve 100% code coverage while your agent hallucinates tool names that don't exist, constructs syntactically valid but semantically nonsensical API parameters, and enters infinite loops through repetitive tool calls that make no progress toward user goals [8].

The Berkeley Function Calling Leaderboard documents this quantitatively. Even GPT-4-class models exhibit non-zero Parameter Hallucination Rates (incorrect parameter names that slip through) and Parameter Missing Rates (required fields that get dropped) [9][19]. Your unit test asserting "can the agent call this API" provides zero guarantee it will do so correctly across the distribution of real production queries.

Ask me how I know. Nothing like watching a 3am tool-loop to humble your coverage dashboard.

Understanding Stochastic Drift

If The Evaluation Blindspot describes the static problem, Stochastic Drift describes why it gets worse over time.

Drift means identical inputs produce not just different outputs but steadily worse ones as the weeks roll by. Unlike traditional bugs, which are deterministic and reproducible, drift manifests across four dimensions that compound multiplicatively [10][11]:

Reasoning Drift: Your agent's internal planning process generates different action sequences for the same user intent. The agent that previously decomposed "book a flight to Paris" into [search_flights → compare_prices → select_option] might later attempt [check_weather → search_flights → search_hotels], introducing unnecessary latency and potential failure points.

Retrieval Drift: Vector embeddings, chunking strategies, or index health degrade quietly. Your agent answering "what is our refund policy?" retrieves shipping policy documents instead and answers confidently wrong, grounded in irrelevant source material.

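Tool Drift: External APIs evolve (adding parameters, deprecating endpoints, changing response schemas) without corresponding updates to your agent's tool-use prompts. The agent keeps calling outdated interfaces, accumulating silent failures that erode task completion rates.

Workflow Drift: The queries real users send diverge from the ones you designed and tested against. If a classifier can easily tell your dev-time queries apart from production queries, your test set no longer represents the traffic your agent actually faces.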

Here's what makes this deadly: an agent experiencing retrieval drift (wrong context) while simultaneously exhibiting reasoning drift (suboptimal planning) produces outputs that are technically "successful"—they complete without errors—but substantively wrong in ways traditional testing cannot detect [11].

Your tests stay green while your users grow frustrated.

A Different Frame for Quality

"You can't manage what you can't measure." — Peter Drucker

But here's the inversion for agentic systems: you can't measure what you refuse to observe.

The paradigm shift required here isn't incremental. It's not "better unit tests" or "more coverage." It's a fundamental move from output validation to process traceability, from deterministic assertions to stochastic drift monitoring, from isolated component testing to multi-agent coordination metrics [2][3].

You need to watch your agent think. You need to evaluate the reasoning pathway, not just the final answer.

An agent that produces the right answer through flawed reasoning will fail on the next semantically similar query. It got lucky. And luck doesn't scale.

The organizations that crack this report 30-40% reductions in hallucinations and 25% improvements in model reliability [5][6]. First-year ROI ranges from 3-6x. But achieving those outcomes demands investment in gold dataset curation, LLM-as-a-Judge architectures that account for systematic biases, and production observability systems that catch drift before it compounds into system failure [5][6].

Let me show you how to build this.

The Agentic Evaluation Protocol: Five Pillars

Pillar 1: Trace Everything (Reasoning Traceability)

If you can't replay exactly what your agent did and why, you can't evaluate it. Period.

This means structured logging across every span of agent execution. Not just inputs and outputs—the intermediate reasoning steps, tool selections, parameter constructions, and state transitions between turns. When integrated properly, you can expose chain spans (high-level workflow orchestration), retriever spans (document retrieval with relevance scores), LLM spans (model invocations with prompts and completions), and tool spans (external API calls with request/response payloads) [14][15].

Arize Phoenix implements these concepts through distributed tracing, capturing latency, token counts, and semantic coherence at each span. This transforms debugging from "the agent gave a wrong answer" to "the agent selected the correct tool but constructed parameters with 0.42 semantic similarity to the intended query, causing the search to return irrelevant results" [14].

Minimum Ship This Week: Log tool name + args + tool outputs + final answer for every agent interaction.

Upgrade Later: Add span IDs, replay capability, and semantic divergence detection across sessions.
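Here is a minimal sketch of the "Minimum Ship This Week" logger. It assumes you control the agent loop and can write one JSON line per interaction to a local file. The `Trace`, `ToolCall`, and `TraceLogger` names and their fields are hypothetical illustrations, not Arize Phoenix's API. Tracing tools give you proper spans once you upgrade.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import Any, Optional


@dataclass
class ToolCall:
    """One tool invocation: the name, the arguments, and what the tool returned."""
    name: str
    args: dict[str, Any]
    output: Any


@dataclass
class Trace:
    """Everything needed to replay one agent interaction (hypothetical schema)."""
    user_input: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    started_at: float = field(default_factory=time.time)
    tool_calls: list[ToolCall] = field(default_factory=list)
    final_answer: Optional[str] = None


class TraceLogger:
    """Appends one JSON line per finished interaction to a local file."""

    def __init__(self, path: str) -> None:
        self.path = path

    def log_tool_call(self, trace: Trace, name: str, args: dict[str, Any], output: Any) -> None:
        trace.tool_calls.append(ToolCall(name=name, args=args, output=output))

    def finish(self, trace: Trace, final_answer: str) -> None:
        trace.final_answer = final_answer
        with open(self.path, "a", encoding="utf-8") as f:
            # default=str keeps non-JSON-serializable tool outputs from crashing the logger
            f.write(json.dumps(asdict(trace), default=str) + "\n")
```

Call `log_tool_call` inside your tool dispatcher and `finish` once the agent answers. Each line of the resulting JSONL file is then one replayable interaction.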

Pillar 2: Evaluate the Chain, Not Just the Output (Tool-Use Accuracy)

Tool-calling has emerged as the dominant production challenge for agentic systems, surpassing reasoning capability in importance according to engineering surveys. And the numbers are brutal: 85% of realistic agent tasks require composing at least three tools sequentially, with 20% demanding seven or more tool calls [16][17].

Each invocation introduces multiple failure modes. Tool selection errors—choosing a plausible but incorrect API. Parameter hallucination—inventing parameter names or values not in the schema. Parameter omission—failing to populate required fields. And brittle error recovery—halting or returning generic messages when a call fails instead of adjusting and retrying [9].

Scale AI's ToolComp benchmark reveals the compositional challenge starkly: accuracy degrades as tool chain length increases, and most models succeed less than 40% of the time on sequences of 7+ tool calls [16]. Your agent might pass "can it call one API" tests while failing catastrophically on real workflows.
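Long chains hurt this much for a reason you can see with a deliberately simplified model. Assume each tool call succeeds independently with the same probability p. Then an n-call chain succeeds with probability pⁿ. Real failures are correlated and sometimes recoverable, so treat this as an intuition pump. It is not a model of how ToolComp scores are produced.

```python
def chain_success_rate(per_call_success: float, chain_length: int) -> float:
    """Probability an n-step tool chain fully succeeds, assuming independent calls."""
    if not 0.0 <= per_call_success <= 1.0:
        raise ValueError("per_call_success must be in [0, 1]")
    return per_call_success ** chain_length


for p in (0.99, 0.95, 0.90):
    rates = {n: round(chain_success_rate(p, n), 3) for n in (1, 3, 7)}
    print(f"p={p:.2f}: {rates}")
```

Under this toy model, a call that works 90% of the time gives only about a 48% chance of completing a seven-call chain (0.9⁷ ≈ 0.478). That is why single-call tests say so little about real workflows.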

Minimum Ship This Week: Track tool call sequences per interaction and flag any session with 3+ consecutive identical tool calls (potential loop indicator).

Upgrade Later: Implement semantic similarity checks between tool call outputs and expected results, auto-flag sequences where tool outputs don't advance toward stated goals.
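Here is a minimal sketch of the loop-indicator check. It assumes your traces record each call as a (tool name, arguments dict) pair. In this sketch, "identical" means the same name and the same arguments, compared after sorting keys so that argument order doesn't matter. The function names are illustrative.

```python
import json
from typing import Any


def canonical_call(name: str, args: dict[str, Any]) -> str:
    """Serialize a tool call so argument order does not affect equality."""
    return name + ":" + json.dumps(args, sort_keys=True, default=str)


def longest_identical_run(calls: list[tuple[str, dict[str, Any]]]) -> int:
    """Length of the longest run of back-to-back identical tool calls."""
    longest = current = 0
    previous = None
    for name, args in calls:
        key = canonical_call(name, args)
        current = current + 1 if key == previous else 1
        longest = max(longest, current)
        previous = key
    return longest


def is_loop_suspect(calls: list[tuple[str, dict[str, Any]]], threshold: int = 3) -> bool:
    """Flag sessions with `threshold` or more consecutive identical calls."""
    return longest_identical_run(calls) >= threshold
```

Exact matching is a cheap first filter. It misses loops where the agent tweaks one argument each time, which is the gap the semantic checks in "Upgrade Later" are meant to close.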

Pillar 3: Set Hard Boundaries (Constraint Adherence)

If your evaluator is also probabilistic... what are you actually trusting?

Agents operate under multiple constraint regimes simultaneously: safety guardrails, policy compliance, business logic, resource limits. Constraint adherence evaluation assesses whether agents respect these boundaries under pressure.

Microsoft's red-team testing documented something particularly insidious: embedding instructions in an agent's memory that cause it to ignore safety guidelines in subsequent interactions. In initial testing, this attack succeeded in 40% of cases. The main limit on its success was that the agent did not always check its memory before responding. After the system prompt was modified to encourage memory consultation, the attack success rate rose to 80%. The lesson is that naive guardrail implementation can paradoxically increase vulnerability [20].

This is why "add more checks" isn't the answer. You need constraints that are structurally enforced, not just prompted.

Infinite loop detection matters here too. Autonomous agents can enter loops through repetitive tool calls that make no progress—what researchers call "Loop Drift" where agents misinterpret termination signals or generate repetitive actions despite explicit stop conditions [23][24]. Production systems need sliding window similarity checks that trigger hard terminations when semantic completion criteria remain unsatisfied after N attempts.

Minimum Ship This Week: Add a max-turns limit (start with 20) and a hard stop when any 5-action window shows >0.9 similarity between actions.
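A minimal sketch of those two hard stops follows, under some explicit assumptions. Actions are serialized to strings, and `difflib.SequenceMatcher` stands in for whatever similarity measure you actually use (embedding cosine similarity is a common alternative). ">0.9 similarity" is read as *every* pair in the 5-action window exceeding 0.9. The constants mirror the starting values above and should be tuned.

```python
import difflib
from itertools import combinations

MAX_TURNS = 20       # starting point suggested in the text
WINDOW = 5           # number of most recent actions compared
SIM_THRESHOLD = 0.9  # pairwise similarity above which actions count as repeats


def similarity(a: str, b: str) -> float:
    """Character-level similarity in [0, 1]; a stand-in for embedding similarity."""
    return difflib.SequenceMatcher(None, a, b).ratio()


def should_hard_stop(actions: list[str], turn: int) -> tuple[bool, str]:
    """Return (stop?, reason) given the serialized actions so far and the turn count."""
    if turn >= MAX_TURNS:
        return True, f"max turns ({MAX_TURNS}) reached"
    if len(actions) >= WINDOW:
        window = actions[-WINDOW:]
        # Stop only if every pair in the window is near-identical: the agent is spinning.
        if all(similarity(a, b) > SIM_THRESHOLD for a, b in combinations(window, 2)):
            return True, f"last {WINDOW} actions are >{SIM_THRESHOLD} similar"
    return False, ""
```

Requiring all pairs to match is conservative, so it rarely kills a legitimate run. Averaging pairwise similarity instead would catch looser loops at the cost of more false stops.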

Upgrade Later: Implement multi-layer guardrails—fast regex patterns for PII detection, ML classifiers for toxicity and intent, LLM-based verification for complex policy adherence on high-stakes paths [46].
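The layered idea can be sketched as a function that runs checks in cost order and stops early. The regex patterns are illustrative, not a complete PII detector. `toxicity_model` and `policy_verifier` are hypothetical callables you would back with your own ML classifier and LLM verification call. Routing on a `risk_level` string is also an assumption about how your system labels requests.

```python
import re
from typing import Callable, Optional

# Tier 1: cheap deterministic checks (illustrative patterns only).
PII_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),       # US SSN-like
    re.compile(r"\b(?:\d[ -]?){13,16}\b"),      # card-number-like digit runs
]

Classifier = Callable[[str], float]  # hypothetical: returns a risk score in [0, 1]
Verifier = Callable[[str], bool]     # hypothetical: True if the text complies with policy


def check_output(
    text: str,
    risk_level: str,
    toxicity_model: Optional[Classifier] = None,
    policy_verifier: Optional[Verifier] = None,
    toxicity_threshold: float = 0.5,
) -> tuple[bool, str]:
    """Run guardrail tiers cheapest-first; higher-risk routes run more tiers."""
    for pattern in PII_PATTERNS:
        if pattern.search(text):
            return False, "tier1: PII pattern"
    if risk_level == "low":
        return True, "passed tier1"
    if toxicity_model is not None and toxicity_model(text) >= toxicity_threshold:
        return False, "tier2: classifier"
    if risk_level == "high" and policy_verifier is not None and not policy_verifier(text):
        return False, "tier3: policy verifier"
    return True, f"passed ({risk_level})"
```

Because the expensive tiers only run when the cheap ones pass and the risk level demands them, most low-risk traffic pays only for the regex tier.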

Pillar 4: Build Your Ground Truth (Gold Datasets)

You need a "single source of truth" against which agent behavior gets measured. But unlike traditional ML datasets focused on input-output pairs, agentic gold datasets must capture multi-turn conversational trajectories with state transitions, intermediate reasoning steps and tool call sequences, and success criteria that may be partially ordered across multiple valid solution paths [6].

Building these takes real investment:

Domain Alignment: Identify representative tasks spanning your agent's operational scope. Common queries, edge cases, and adversarial inputs.

Expert Curation: Subject matter experts craft canonical responses, annotating correct tool sequences, reasoning steps, and acceptable output variations. A "book a flight" task might have gold standard sequences for one-way, round-trip, and multi-city variants.

Synthetic Augmentation: Generate cases covering distribution tails that human curators miss—paraphrased queries, adversarial perturbations, cross-lingual variations if relevant, edge case amplification for rare but critical scenarios [26][27].

Organizations implementing gold dataset methodologies report 30-40% reductions in hallucinations [6]. But be warned: gold datasets decay over time as product capabilities evolve and user behavior shifts. You need DataOps pipelines that ingest production failures as candidate additions, run automated quality checks, trigger human-in-the-loop review for ambiguous cases, and version/deploy updated datasets to CI/CD evaluation gates.

Minimum Ship This Week: Write 20 adversarial test cases by hand—queries designed to trigger known failure modes in your specific domain.

Upgrade Later: Implement synthetic data generation with quality gates that filter low-fidelity outputs (expect 30-40% of initial synthetic data to need filtering or human correction) [26].
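A gold case for an agent needs more than an input and an expected string. Below is a minimal sketch of one possible record shape and a trajectory check. The schema is hypothetical. It allows several valid tool paths, but it matches them exactly, which is simpler than the partially ordered success criteria described above. Hand-written adversarial cases can simply carry an `"adversarial"` tag.

```python
from dataclasses import dataclass, field


@dataclass
class GoldCase:
    """One gold-dataset entry (hypothetical schema)."""
    case_id: str
    user_turns: list[str]
    # A task can have more than one correct path, so store every acceptable sequence.
    valid_tool_paths: list[list[str]]
    must_not_call: set[str] = field(default_factory=set)
    tags: set[str] = field(default_factory=set)  # e.g. {"adversarial", "round-trip"}


def score_trajectory(case: GoldCase, tool_path: list[str]) -> dict[str, bool]:
    """Compare an agent's observed tool sequence against the gold case."""
    return {
        "matches_valid_path": tool_path in case.valid_tool_paths,
        "no_forbidden_calls": not (set(tool_path) & case.must_not_call),
    }
```

For the "book a flight" example, one-way, round-trip, and multi-city variants would each be their own case, and each case could list more than one acceptable sequence.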

Pillar 5: Automate Judgment (LLM-as-a-Judge)

Manual evaluation doesn't scale to production volumes. Traditional metrics like BLEU or ROUGE correlate poorly with human judgment on open-ended generative tasks. LLM-as-a-Judge architectures employ stronger language models to evaluate weaker agents, automating quality assessment while approximating human preferences [30][31][32].

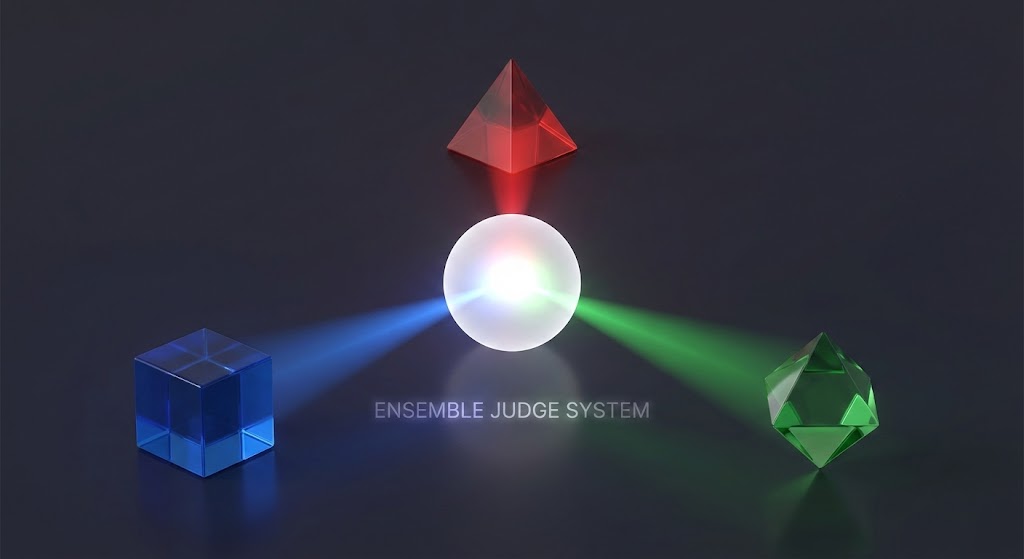

But here's what most people get wrong: LLM judges have severe, documented biases.

Research shows individual LLM judges achieve 96% True Positive Rate—correctly identifying valid outputs—but only 25% True Negative Rate for catching invalid outputs [33]. They're agreeable to a fault. This asymmetry causes systematic overestimation of agent quality because the judge rarely flags subtle errors. Position bias (favoring outputs appearing first), length bias (longer responses score higher independent of quality), and self-enhancement bias (rating outputs from their own model family higher) all compound the problem [33].

Recent work quantifies these effects: individual LLM judges show maximum errors of 17.6% on annotated outputs. Simple majority voting among ensemble judges reduces maximum error to 14.8%. Minority veto strategies—where a small subset can override the majority—reduce maximum error to 2.8%. Regression-based calibration using ground-truth labels achieves 1.2% maximum error [33].
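The gap between majority voting and minority veto is easy to see in code. This is a minimal sketch, assuming each judge returns a plain valid/invalid verdict. `veto_count` is a hypothetical knob, and the sketch does not reproduce the cited error figures. The intuition: judges are agreeable, so they rarely say "invalid." A single dissenting "invalid" vote therefore carries more signal than the majority's approval.

```python
def majority_vote(verdicts: list[bool]) -> bool:
    """Accept the output if more than half of the judges say it is valid."""
    return sum(verdicts) > len(verdicts) / 2


def minority_veto(verdicts: list[bool], veto_count: int = 1) -> bool:
    """Reject the output if at least `veto_count` judges flag it as invalid."""
    invalid_votes = sum(1 for v in verdicts if not v)
    return invalid_votes < veto_count
```

With verdicts `[True, True, True, False, True]`, majority voting accepts the output while a one-judge veto rejects it. Raising `veto_count` trades some of that sensitivity back for fewer false rejections.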

One study on flawed reasoning traces showed the evaluator model achieved only 44.1% accuracy when attempting to identify flawed reasoning—barely better than random [7]. And in 45.2% of cases, the evaluator explicitly validated the flawed reasoning as sound. When asked to re-evaluate inputs they'd just incorrectly validated, the models reversed their judgment only 4.4% of the time [7].

This suggests that once a hallucination takes root in the context window, it resists self-correction. Simple "self-reflection" loops aren't enough.

Minimum Ship This Week: Implement basic LLM-as-a-Judge scoring on your gold dataset with a rubric covering helpfulness, accuracy, policy compliance, and tone.

Upgrade Later: Deploy ensemble judging with heterogeneous models (mix model families to diversify biases), establish human-in-the-loop validation on judge disagreements, and monitor judge-human agreement drift as production data evolves [37].
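Here is a minimal sketch of rubric scoring against a gold reference. `call_llm` is a hypothetical placeholder for whichever model client you use. The 1-5 scale and the JSON reply format are assumptions. Validation is strict on purpose: a malformed judge reply should fail loudly rather than count as a pass.

```python
import json
from typing import Callable

RUBRIC = {
    "helpfulness": "Does the answer resolve the user's request?",
    "accuracy": "Are all factual claims and tool results used correctly?",
    "policy_compliance": "Does the answer follow the stated policies?",
    "tone": "Is the tone appropriate for the product?",
}


def build_judge_prompt(question: str, answer: str, reference: str) -> str:
    criteria = "\n".join(f"- {name}: {desc}" for name, desc in RUBRIC.items())
    return (
        "Score the ANSWER against the REFERENCE on each criterion from 1 to 5.\n"
        f"{criteria}\n"
        'Reply with JSON only, e.g. {"helpfulness": 4, "accuracy": 5, ...}.\n\n'
        f"QUESTION: {question}\nREFERENCE: {reference}\nANSWER: {answer}"
    )


def judge(question: str, answer: str, reference: str,
          call_llm: Callable[[str], str]) -> dict[str, int]:
    """Ask a judge model for rubric scores and validate the reply strictly."""
    scores = json.loads(call_llm(build_judge_prompt(question, answer, reference)))
    missing = set(RUBRIC) - set(scores)
    if missing:
        raise ValueError(f"judge omitted criteria: {sorted(missing)}")
    for name in RUBRIC:
        value = scores[name]
        if not isinstance(value, int) or not 1 <= value <= 5:
            raise ValueError(f"invalid score for {name}: {value!r}")
    return {name: scores[name] for name in RUBRIC}
```

Swapping in several `call_llm` functions backed by different model families turns this into the ensemble setup described under "Upgrade Later."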

The Production Layer: The LOOP System

L - Log everything in production. Stream feature statistics, predictions, and interaction traces through real-time processing. You need this data to compute drift metrics [54].

O - Observe for drift across all four dimensions. Set statistical thresholds for reasoning drift (KL divergence on action distributions), retrieval drift (embedding distribution statistics), tool drift (API response changes, error rate spikes), and workflow drift (classifier accuracy distinguishing dev-time from production queries). Alert when divergence exceeds calibrated bounds [54][11].

O - Operate layered guardrails. Three-tier defense: fast rule-based validators (sub-10ms for regex patterns, blocklists, schema validation), ML classifiers (100-300ms for toxicity, bias, intent), and LLM-based verification (500ms-2s+ for complex policy adherence) [46]. Route by risk level—internal FAQs take the fast path, financial guidance takes the full stack.

P - Propagate learnings back. Build a continuous feedback loop between production logs and eval sets. Ingest failures as candidate gold dataset additions. Periodically sample agent outputs for human annotation to measure whether judge scores remain predictive of human preferences.
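The "Observe" step's reasoning-drift signal, KL divergence on action distributions, can be sketched as comparing tool-call frequencies from a baseline window against a production window. Several choices here are assumptions: additive smoothing (`alpha`) avoids dividing by zero when a tool appears in only one window; the result is in nats; and only marginal tool frequencies are compared, not call order. Any alert threshold has to be calibrated on your own traffic.

```python
import math
from collections import Counter


def action_distribution(actions: list[str], vocab: set[str], alpha: float = 1.0) -> dict[str, float]:
    """Tool-call frequencies with additive smoothing so no probability is zero."""
    counts = Counter(actions)
    total = len(actions) + alpha * len(vocab)
    return {a: (counts[a] + alpha) / total for a in vocab}


def kl_divergence(p: dict[str, float], q: dict[str, float]) -> float:
    """KL(P || Q) in nats; P is the production window, Q the baseline."""
    return sum(p[a] * math.log(p[a] / q[a]) for a in p)


def reasoning_drift(baseline: list[str], production: list[str]) -> float:
    """Divergence of production tool usage from the baseline tool usage."""
    vocab = set(baseline) | set(production)
    return kl_divergence(action_distribution(production, vocab),
                         action_distribution(baseline, vocab))
```

For the earlier Paris example, a baseline of [search_flights, compare_prices, select_option] drifting to [check_weather, search_flights, search_hotels] produces a clearly non-zero score, while identical windows score exactly zero.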

Effective HITL (human-in-the-loop) architectures target escalation rates of roughly 10-15%: 85-90% of tasks are handled autonomously, and the lowest-confidence or highest-risk tasks are routed to humans [21]. This exception management model makes human oversight economically feasible while catching what automated systems miss.
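One simple way to aim at a target escalation rate is a percentile cutoff over recent confidence scores. This sketch assumes your agent emits a per-task confidence score, which is a hypothetical signal you would need to define. It also sends every high-risk task to a human regardless of confidence, which pushes the real rate somewhat above the target.

```python
import math


def escalation_threshold(confidences: list[float], target_rate: float = 0.12) -> float:
    """Confidence cutoff so that roughly `target_rate` of recent tasks fall at or below it."""
    if not confidences:
        raise ValueError("need historical confidence scores")
    ranked = sorted(confidences)
    k = max(1, math.ceil(target_rate * len(ranked)))  # number of tasks to escalate
    return ranked[k - 1]


def route(confidence: float, high_risk: bool, threshold: float) -> str:
    """Send high-risk or low-confidence tasks to a human; automate the rest."""
    return "human" if high_risk or confidence <= threshold else "agent"
```

Recompute the threshold periodically from fresh production data. If confidence scores drift, a fixed cutoff silently changes your escalation rate.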

The Economics You Can't Ignore

Before you ship any of this, understand the cost reality.

In high-stakes workflows, the "Cost of Quality" ratio—inference compute spent on evaluation versus execution—can reach 1:1 or even 2:1. For every token your worker agent generates, one or two tokens get generated by critic/verifier agents to check the work [2].

One study on a news-verification agent showed saturated retrieval and verification required approximately 200,000 tokens per article—higher than zero-shot generation but justified by eliminating hallucinations that would damage reputation [2]. For high-value tasks, the market is willing to pay a verification premium.

But you need to measure this. Production cost tracking should monitor token usage per interaction (input + output + tool calls), API call counts and rate limit utilization, infrastructure costs, and HITL escalation frequency [5].
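Here is a minimal sketch of per-interaction cost accounting that also yields the "Cost of Quality" ratio from above. The field names are hypothetical and assume you can attribute tokens to either the worker agent or the critic/verifier agents. Tool payloads count toward execution because they are fed back into the worker's context.

```python
from dataclasses import dataclass


@dataclass
class InteractionCost:
    """Token accounting for one interaction (hypothetical fields)."""
    worker_input_tokens: int
    worker_output_tokens: int
    tool_tokens: int       # tool-call payloads and results fed back to the worker
    verifier_tokens: int   # all tokens consumed by critic/judge/verifier agents
    escalated_to_human: bool = False

    @property
    def execution_tokens(self) -> int:
        return self.worker_input_tokens + self.worker_output_tokens + self.tool_tokens


def cost_of_quality_ratio(interactions: list[InteractionCost]) -> float:
    """Evaluation tokens per execution token: 1.0 means 1:1, 2.0 means 2:1."""
    execution = sum(i.execution_tokens for i in interactions)
    evaluation = sum(i.verifier_tokens for i in interactions)
    return evaluation / execution if execution else 0.0


def escalation_rate(interactions: list[InteractionCost]) -> float:
    """Fraction of interactions handed to a human."""
    if not interactions:
        return 0.0
    return sum(i.escalated_to_human for i in interactions) / len(interactions)
```

Multiplying each token bucket by your provider's per-token price turns the same records into a dollar figure per interaction.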

Latency matters too. Targets by workload:

- Simple queries: P50 under 500ms, P95 under 1 second

- Complex workflows: P50 under 2 seconds, P95 under 4 seconds

- Multi-agent orchestration: P50 under 3 seconds, P95 under 6 seconds

- Voice AI agents: P50 under 800ms for conversational flow [60]

Miss these targets and users bounce regardless of accuracy.
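The latency targets above can be checked mechanically against recorded response times. This is a minimal sketch. The workload labels are hypothetical keys, and percentiles come from the standard library's `statistics.quantiles` (default "exclusive" method), which needs at least two samples. Voice has no stated P95 target, so the sketch skips that check for voice.

```python
import statistics
from typing import Optional

# (P50, P95) targets in milliseconds, taken from the text; None means no stated target.
LATENCY_TARGETS_MS: dict[str, tuple[float, Optional[float]]] = {
    "simple": (500, 1000),
    "complex": (2000, 4000),
    "multi_agent": (3000, 6000),
    "voice": (800, None),
}


def percentiles(samples_ms: list[float]) -> tuple[float, float]:
    """P50 and P95 of the samples; quantiles(n=100) returns 99 cut points."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two latency samples")
    cuts = statistics.quantiles(samples_ms, n=100)
    return cuts[49], cuts[94]


def check_latency(workload: str, samples_ms: list[float]) -> dict[str, bool]:
    """Compare observed P50/P95 against the workload's targets."""
    p50, p95 = percentiles(samples_ms)
    p50_target, p95_target = LATENCY_TARGETS_MS[workload]
    return {
        "p50_ok": p50 < p50_target,
        "p95_ok": p95_target is None or p95 < p95_target,
    }
```

Checking P95 separately matters because a healthy median can hide a slow tail. A workload where 20% of requests take 1.5 seconds still passes the simple-query P50 target but fails P95.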

Organizations that neglect cost and latency optimization report agent costs of 5-10× initial projections, creating unsustainable unit economics that force deployment rollbacks despite technical success [5].

Who You're Becoming

Let me tell you what this is really about.

It's not about learning evaluation frameworks. It's not about adding more monitoring dashboards. It's not even about catching bugs before users do.

It's about becoming the builder who ships reliable agentic systems while everyone else ships demos that crumble in production.

Right now, 79% of organizations report implementing agentic AI, with 96% planning expansion in 2025 [4]. The frontier is getting crowded. But research suggests 40% of agentic initiatives fail to reach production [4]. The differentiator isn't agent capability—frontier models are commoditizing. The differentiator is evaluation rigor.

The engineers who build robust verification layers, who mathematically model agent state, who economically justify their verification loops—they're the ones building the next generation of autonomous AI that actually works.

You can be one of them. Or you can keep watching your coverage dashboard stay green while your agents silently degrade.

The choice compounds.

Ship something reliable this week.

Here's your checklist:

- Log traces (tool name + args + outputs + final answer)

- Write 20 adversarial cases specific to your domain

- Add 3 hard constraints (max turns, loop detection threshold, at least one safety guardrail)

That's your minimum viable evaluation system. Start there. Expand from the pillars. Build the LOOP for production.

Stop guessing. Start measuring.

— Ulver

Further Reading

For those tracking the research frontier, here are key benchmarks and frameworks mentioned:

- TRAIL (trace-based agent evaluation): 148 annotated execution traces with 800+ identified errors, testing context handling and tool failure recovery [19]

- AgentRewardBench: Evaluation framework for web agent trajectories [18]

- τ-Bench (tau-bench): Long-horizon task evaluation requiring domain-specific policy compliance [21]

- Berkeley Function Calling Leaderboard: Standardized measurement of tool-calling accuracy [19]

- ToolComp (Scale AI): Compositional tool-use benchmark revealing exponential accuracy degradation [16]

- SeqComm (Sequential Communication): Asynchronous coordination protocol using dynamic priority assignment to resolve circular dependencies in multi-agent systems [1]

- AgentGuard: Runtime verification framework using Markov Decision Processes for probabilistic safety bounds [1]

- First-Success/Best-of-N/Proactive Selection: Loop prevention patterns—round-robin until exit condition (First-Success), parallel execution with judge selection (Best-of-N), pre-routing to avoid multi-agent complexity (Proactive Selection) [1]

Sources

[1] https://galileo.ai/blog/multi-agent-ai-failures-prevention
[2] https://validmind.com/blog/the-need-for-new-approaches-agentic-ai/
[3] https://blog.arcade.dev/agentic-framework-adoption-trends
[4] https://www.multimodal.dev/post/agentic-ai-statistics
[5] https://www.aviso.com/blog/how-to-evaluate-ai-agents-latency-cost-safety-roi
[6] https://www.techment.com/blogs/golden-datasets-for-genai-testing/
[7] https://www.techaheadcorp.com/blog/agentic-ai-evaluation-ensuring-reliability-and-performance/
[8] https://arxiv.org/html/2505.02709v1
[9] https://blog.quotientai.co/evaluating-tool-calling-capabilities-in-large-language-models-a-literature-review/
[10] https://www.ibm.com/think/insights/agentic-drift-hidden-risk-degrades-ai-agent-performance
[11] https://apml.substack.com/p/why-ai-agents-rot-the-4-hidden-drifts
[14] https://arize.com/docs/phoenix/integrations/python/llamaindex
[15] https://www.youtube.com/watch?v=iOGu7-HYm6s
[16] https://scale.com/leaderboard/tool_use_enterprise
[17] https://galileo.ai/blog/benchmarks-multi-agent-ai
[18] https://www.concentrix.com/insights/blog/12-failure-patterns-of-agentic-ai-systems/
[19] https://gorilla.cs.berkeley.edu/leaderboard.html
[20] https://cdn-dynmedia-1.microsoft.com/is/content/microsoftcorp/microsoft/final/en-us/microsoft-brand/documents/Taxonomy-of-Failure-Mode-in-Agentic-AI-Systems-Whitepaper.pdf
[21] https://ainativedev.io/news/8-benchmarks-shaping-the-next-generation-of-ai-agents
[23] https://explorer.invariantlabs.ai/docs/guardrails/loops/
[24] https://www.fixbrokenaiapps.com/blog/ai-agents-infinite-loops
[26] https://www.evidentlyai.com/llm-guide/llm-test-dataset-synthetic-data
[27] https://arize.com/resource/synthetic-data-generation-for-ai-agents/
[30] https://en.wikipedia.org/wiki/LLM-as-a-Judge
[31] https://www.confident-ai.com/blog/why-llm-as-a-judge-is-the-best-llm-evaluation-method
[32] https://www.evidentlyai.com/llm-guide/llm-as-a-judge
[33] https://aicet.comp.nus.edu.sg/wp-content/uploads/2025/10/Beyond-Consensus-Mitigating-the-agreeableness-bias-in-LLM-judge-evaluations.pdf
[37] https://proceedings.iclr.cc/paper_files/paper/2025/file/08dabd5345b37fffcbe335bd578b15a0-Paper-Conference.pdf
[46] https://authoritypartners.com/insights/ai-agent-guardrails-production-guide-for-2026/
[54] https://www.adopt.ai/glossary/agent-drift-detection
[60] https://arxiv.org/html/2507.21504v1